It sounds like a movie plot, but it’s terrifyingly real: a finance worker was tricked into sending $25 million after a video call with deepfake versions of his senior colleagues. This isn’t a one-off event. It’s a serious warning about the new face of digital fraud. Synthetic media is dismantling the trust we place in online communication. When you can’t be sure you’re speaking to a real person, how can you do business? We’ll show you how these threats work and, more importantly, explain the critical role of deepfake detection in securing your organization.

Key Takeaways

- Deepfakes directly threaten business operations: They enable sophisticated fraud, spread damaging misinformation, and violate user privacy, turning digital trust into a critical vulnerability.

- Fighting AI requires smarter AI: Effective detection systems find what humans miss by analyzing digital artifacts, confirming real-time presence with liveness checks, and using biometrics to verify a person is real.

- A strong defense is an adaptive one: Don’t rely on a single tool; the best strategy layers different detection technologies, includes continuous updates to counter new threats, and combines automated analysis with human oversight.

What Is a Deepfake and How Are They Made?

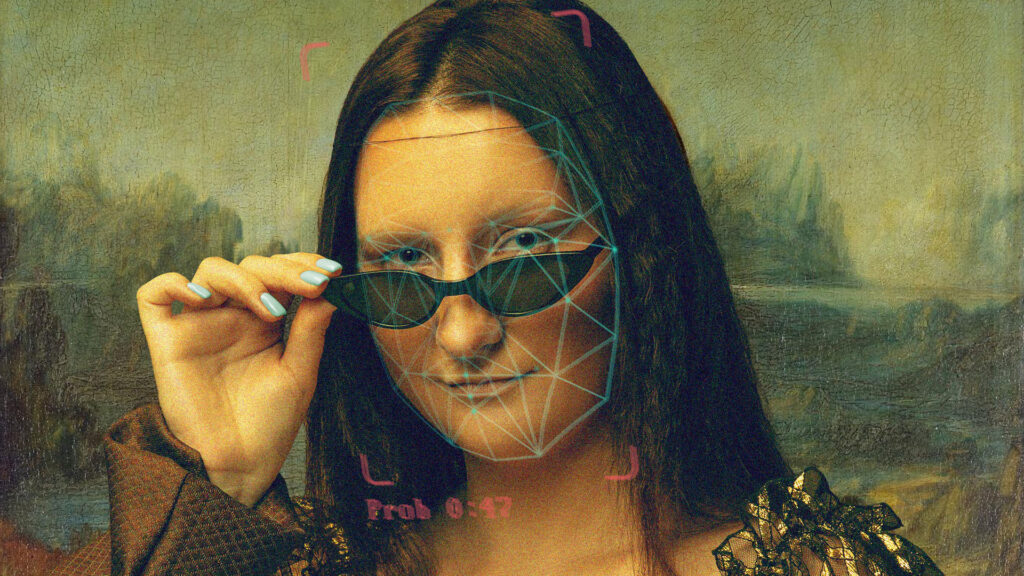

You’ve probably heard the term “deepfake,” but what does it actually mean? At its core, a deepfake is a piece of synthetic media where a person’s likeness has been replaced with someone else’s. As the Walton College at the University of Arkansas explains, “Deepfakes are fake videos or audio created using artificial intelligence (AI) that make it seem like someone is saying or doing something they aren’t.” The name itself is a blend of “deep learning” (a type of AI) and “fake.”

These aren’t your average photo edits. Deepfakes are generated by sophisticated algorithms that have been trained on massive amounts of data. The result can be a video or audio clip that is incredibly difficult to distinguish from the real thing. Understanding the mechanics behind these creations is the first step toward building a strong defense against them. By knowing how they are made, we can get better at spotting the subtle flaws that give them away and protect our platforms from manipulation.

Understanding the Tech That Powers Deepfakes

The magic behind a deepfake lies in complex artificial intelligence models. These systems analyze thousands of images and videos of a target person to learn their unique facial expressions, mannerisms, and voice patterns. The AI then uses this knowledge to map the target’s likeness onto a different person in a source video, creating a seamless and often convincing fake. The technology has become so effective that it requires equally advanced methods to spot the deception. As the security experts at Pindrop note, “deepfake detection tools look for tiny mistakes in faces, lighting, sounds, or pixels to tell real content from fake.” These almost invisible clues are often the only sign that you’re not looking at a real person.

Popular Methods for Creating Deepfakes

The most common method for creating deepfakes involves a clever AI architecture called a Generative Adversarial Network, or GAN. Think of a GAN as a two-player game between a pair of neural networks. One network, the “generator,” works to create the fake image or video. The second network, the “discriminator,” acts as a detective, trying to determine if the content is real or fake. This process is repeated thousands of times, with the generator getting progressively better at fooling the discriminator. This adversarial process is why deepfakes have improved so rapidly. As Pindrop puts it, this technique involves “two neural networks contesting with each other to create realistic images or videos.”
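
The two-player game described above is usually written as a single minimax objective. The formula below is the standard textbook formulation of a GAN, not something specific to any particular deepfake tool. Here $G$ is the generator, $D$ is the discriminator, $x$ is a real sample, and $z$ is the random noise the generator starts from.

```latex
\min_G \max_D \;
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator pushes this value up by scoring real samples close to 1 and generated samples close to 0. The generator pushes it down by producing samples that the discriminator scores close to 1, which is the "fooling" step in the game.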

What Makes Them So Believable?

Deepfakes are convincing because the AI behind them is designed to replicate the tiny, almost imperceptible details that we associate with human authenticity. This includes everything from the way a person blinks to the subtle inflections in their voice. As the technology advances, the barrier to entry is also getting lower, making it easier for anyone to create this type of synthetic media. This accessibility is a major reason why deepfakes pose such a significant threat. They “are becoming more advanced and easier to create, leading to serious risks for people, companies, and even countries.” This combination of realism and accessibility is what makes them a powerful tool for everything from spreading false information to committing financial fraud.

Why Is Deepfake Detection So Important?

The rapid rise of convincing deepfakes presents a direct threat to the trust that underpins our digital world. From personal conversations to global commerce, the assumption that we are interacting with a real person is fundamental. When that certainty is gone, the systems we rely on for communication, business, and security begin to falter. The stakes are incredibly high, affecting everything from brand reputation to financial stability. Understanding the specific ways deepfakes erode trust makes it clear why effective detection is no longer a luxury, but a necessity for any modern enterprise.

The Alarming Rise of Deepfakes in Numbers

This isn’t a distant, theoretical threat; the data shows that deepfakes are already a widespread problem. One detection tool has already identified over 50,000 deepfakes, with some of the highest concentrations found where trust is most critical. Among the content that tool flagged for review, 89% of social media profiles and 67% of suspicious video calls were found to be deepfakes. This highlights a massive vulnerability for platforms built on user identity and interaction. The issue extends across the board, with significant numbers of deepfakes also found in financial documents and even news media. As the technology behind them becomes more accessible, these numbers will only climb, making it crucial for people to get better at spotting manipulated content and for businesses to deploy systems that can verify real human presence.

How Deepfakes Fuel Misinformation and Manipulation

Deepfakes are potent tools for spreading false narratives at an unprecedented scale and speed. Because they are becoming easier to create, they can be used to generate fake news, manipulate public opinion, and launch sophisticated online attacks. For businesses, the danger is immediate. Imagine a deepfake video of your CEO announcing a phony product recall or a fabricated customer testimonial trashing your services. The financial impact of misinformation can be devastating, causing stock prices to plummet and erasing customer confidence overnight. In an environment where seeing is no longer believing, platforms need a reliable way to verify the human source behind the content.

The Erosion of Personal Privacy and Consent

At its core, the deepfake problem is a human problem. A staggering amount of deepfake content involves the creation of non-consensual material, representing a profound violation of individual privacy and autonomy. This raises serious ethical questions for any organization operating online. Even when used for seemingly harmless purposes like marketing, creating a digital replica of a person without their explicit and informed consent is a major liability. It breaks the trust you have not only with the individual being depicted but also with your audience, who may question the authenticity of all your communications. Upholding digital consent is a cornerstone of building a trustworthy online presence.

The Rise of Deepfake-Powered Fraud

Deepfakes are a game-changer for financial crime, enabling fraud that is nearly impossible for a person to spot. In one high-profile case, a finance worker transferred $25 million after a video call with people he believed were his senior colleagues; every one of them was a deepfake. AI-generated video and audio of this kind can bypass security protocols that rely on human verification. Scammers can use them to impersonate executives, authorize fraudulent payments, or gain access to sensitive systems. This form of synthetic identity fraud is a direct assault on a company’s assets and operational integrity, and it shows why digital verification is critical.

A New Tool for Harassment and Blackmail

The power to create realistic fake media can easily be weaponized for personal and professional attacks. Deepfakes can be used to place individuals in compromising situations, fabricate inflammatory statements, or create false evidence for blackmail. For a business, this could mean an employee being targeted to damage their reputation or a leader being impersonated to spread internal discord. For online platforms, this creates a massive content moderation crisis. Failing to detect and remove malicious deepfakes not only exposes users to harm but also erodes the safety and trust of the entire community, leaving the platform vulnerable to legal and reputational damage.

Beyond Business: Where Deepfake Detection Is Used

While the business implications of deepfakes are massive, their impact stretches far beyond corporate fraud and brand reputation. This technology is reshaping industries and challenging the very nature of truth in our daily lives. From the courtroom to the classroom, the need to distinguish real from fake has become a critical function. Understanding these diverse applications highlights the universal importance of deepfake detection. It’s not just about protecting a company’s bottom line; it’s about safeguarding the integrity of our institutions, our educational systems, and even our personal relationships from the corrosive effects of digital deception.

Protecting Journalism and Public Discourse

In an era where misinformation can spread like wildfire, deepfakes represent a serious threat to a free press and informed public debate. They can be used to create fabricated quotes from world leaders, stage fake events, or discredit journalists with slanderous content. For news organizations, the ability to verify the authenticity of video and audio sources is essential to maintaining credibility. Deepfake detection tools give journalists a way to vet user-generated content and protect their audiences from false narratives. This technology acts as a crucial line of defense, helping to preserve the trust between the media and the public it serves.

Enhancing Education and Media Literacy

As synthetic media becomes more common, teaching people how to critically evaluate what they see online is more important than ever. Deepfake detection is becoming a key component of media literacy education. Initiatives like MIT’s “Detect DeepFakes” project are designed to help the public learn how to spot AI-generated fakes, turning a technological threat into a teachable moment. By integrating detection tools and principles into educational programs, we can equip the next generation with the skills needed to identify manipulation and become more discerning consumers of digital content, fostering a more resilient and informed society.

Ensuring Evidence Integrity in the Legal System

The legal system is built on a foundation of verifiable evidence, a foundation that deepfakes are poised to shake. The possibility of fabricated video or audio evidence being presented in court could lead to wrongful convictions or acquittals, fundamentally undermining the justice process. To counter this, legal professionals are beginning to use advanced detection services to authenticate digital evidence. Companies like Facia offer tools that can analyze video files submitted in court to confirm they haven’t been manipulated. This ensures that legal decisions are based on genuine facts, not sophisticated forgeries.

Safeguarding Personal and Family Communications

The threat of deepfakes hits close to home, with scammers now using the technology to impersonate loved ones. Imagine receiving a frantic audio message from a family member asking for money, where their voice has been perfectly cloned by AI. This is already happening. Free online tools are emerging that allow individuals to check suspicious audio clips or videos, offering a quick way to verify if they are communicating with a real person or a fake. This application of detection technology provides a crucial layer of personal security, helping protect families from emotional manipulation and financial scams.

How to Spot a Deepfake: Manual Detection Tips

While sophisticated AI is often needed to catch the most advanced deepfakes, many still contain subtle flaws that the human eye can pick up on—if you know what to look for. Developing a critical eye for digital content is a valuable skill for anyone who spends time online. It’s about moving from passive consumption to active observation. By paying close attention to the small details that AI often gets wrong, you can become better at identifying potential fakes. These manual checks aren’t foolproof, but they provide a solid first line of defense against obvious manipulation and help you question the authenticity of what you see.

Look for Unnatural Facial and Skin Details

AI is good, but it’s not perfect at recreating the nuances of human skin and facial structure. Look closely at the edges of the face. Do you see any blurring or distortion where the face meets the hair or neck? Sometimes the skin texture appears too smooth or too waxy, lacking the natural pores and blemishes of a real person. Shadows can also be a giveaway; check if they fall correctly according to the lighting in the environment. An odd shadow under the nose or a lack of reflection in the eyes can be a sign that the face has been digitally grafted onto the video.

Check the Eyes, Eyebrows, and Glasses

The eyes are often called the window to the soul, and in the case of deepfakes, they can be a window to the truth. AI models sometimes struggle with natural eye movement and blinking. A person in a video who rarely blinks, or blinks in an unnatural, stuttered way, could be a red flag. If the person wears glasses, look for glare. Does the reflection on the lenses change realistically as their head moves? Unnatural glare or a complete lack of it can indicate a fake. Similarly, eyebrows that look pasted on or don’t match the person’s hair texture are another detail to watch for.

Pay Attention to Blinking and Lip Syncing

Synchronizing lip movements with audio is one of the most challenging parts of creating a convincing deepfake. Watch the person’s mouth carefully as they speak. Do their lip movements perfectly match the words you’re hearing? Awkward, jerky, or poorly synced mouth movements are a classic sign of a deepfake. The same goes for blinking. Real people blink at a regular, almost subconscious rate. An AI-generated person might blink too frequently or not at all for long stretches. These small, involuntary actions are incredibly difficult for algorithms to replicate perfectly, making them a reliable place to look for signs of digital manipulation.

How Does Deepfake Detection Technology Work?

It might feel like we’re losing the battle against deepfakes, but the truth is, the technology to fight back is getting smarter every day. The same artificial intelligence that creates these convincing fakes is also our best weapon for detecting them. Think of it as fighting fire with fire. Instead of relying on the human eye, which can be easily fooled, detection systems use powerful algorithms to catch the subtle mistakes and digital artifacts that deepfakes leave behind.

These systems work by analyzing everything from individual pixels and audio frequencies to a file’s metadata and encoding. They are trained on vast libraries of both real and synthetic media, learning to recognize the telltale signs of digital manipulation. This isn’t about a single magic bullet. Instead, effective detection relies on a combination of different techniques, each looking for a specific type of clue. By layering these methods, platforms can build a robust defense that confirms whether they are interacting with a real person or a sophisticated fake. It’s a constant game of cat and mouse, but with the right tools, we can stay one step ahead.

Using AI to Catch AI-Generated Fakes

At the heart of deepfake detection is artificial intelligence. Specialized systems use AI disciplines like computer vision and audio analysis to scan content for flaws that are nearly impossible for a person to spot. These models are trained on enormous datasets containing thousands of hours of both authentic and deepfake videos. Through this training, the AI learns to identify the microscopic inconsistencies and patterns that give fakes away. Because the models are constantly learning from new examples, they can adapt over time, helping security platforms keep up with the latest manipulation techniques used by bad actors.

Spotting the Telltale Visual and Audio Flaws

So, what exactly are these AI models looking for? They’re hunting for tiny errors that slip through during the deepfake creation process. Visually, this could be anything from unnatural blinking patterns or a lack of emotion to weird shadows and inconsistent lighting between the face and the background. The AI might also spot strange reflections in a person’s eyes or areas where the edge of a face looks blurry or distorted. On the audio side, detection tools listen for unnatural pauses, a robotic tone, or background noise that doesn’t match the visual environment. These small but significant clues act as a clear signal that the content isn’t genuine.
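
One of the cues above, unnatural blinking, is simple enough to sketch in code. The example assumes an upstream face-landmark model (not shown) has already produced an eye aspect ratio (EAR) for each frame, a value that drops sharply when the eye closes. The function names, the 0.2 threshold, and the 4 to 40 blinks-per-minute band are illustrative assumptions, not values taken from any particular detection product.

```python
def count_blinks(ear_series, closed_threshold=0.2, min_closed_frames=2):
    """Count blinks in a per-frame eye-aspect-ratio (EAR) series.

    A blink is a run of at least `min_closed_frames` consecutive frames
    whose EAR is below `closed_threshold`. Both defaults are assumptions.
    """
    blinks = 0
    run = 0  # length of the current run of "eye closed" frames
    for ear in ear_series:
        if ear < closed_threshold:
            run += 1
        else:
            if run >= min_closed_frames:
                blinks += 1
            run = 0
    if run >= min_closed_frames:  # the clip ended while the eye was closed
        blinks += 1
    return blinks


def blinks_per_minute(ear_series, fps):
    """Convert the blink count into a rate, given the video frame rate."""
    duration_minutes = len(ear_series) / fps / 60
    if duration_minutes == 0:
        return 0.0
    return count_blinks(ear_series) / duration_minutes


def blink_rate_is_suspicious(rate, low=4.0, high=40.0):
    """Flag rates outside an assumed plausible band (illustrative bounds)."""
    return rate < low or rate > high
```

A one-minute clip with no blinks at all would produce a rate of 0.0 and be flagged. A real detector would treat this as one weak signal among many, since a calm speaker or a short clip can also produce an unusual rate.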

Uncovering the Digital Fingerprints of AI

Every tool leaves a mark, and the AI models used to create deepfakes are no exception. Some detection methods focus on finding the unique digital “fingerprints” that generative models leave on the content they produce. This technique, sometimes called GAN fingerprinting, works by identifying recurring patterns or artifacts that are specific to the algorithm that created the deepfake. It’s like a forensic analyst tracing a tool mark back to a specific instrument. By recognizing these digital signatures, a system can determine not only that a video is a fake but sometimes even which type of software was used to make it.
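
The matching step behind fingerprinting can be sketched as a nearest-match lookup. The example assumes an earlier stage (not shown) has already reduced a piece of media to a short numeric "residual" vector, and that a library of reference fingerprints exists. The generator names, vectors, and the 0.9 similarity cutoff are all made up for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def attribute_generator(residual, known_fingerprints, min_similarity=0.9):
    """Return (name, similarity) for the closest known fingerprint.

    If nothing is similar enough, the name is None, which means
    "no known generator matched", not "the content is authentic".
    """
    best_name, best_sim = None, -1.0
    for name, fingerprint in known_fingerprints.items():
        sim = cosine_similarity(residual, fingerprint)
        if sim > best_sim:
            best_name, best_sim = name, sim
    if best_sim < min_similarity:
        return None, best_sim
    return best_name, best_sim


# Hypothetical reference library; real fingerprints would be far larger.
KNOWN = {
    "generator_a": [0.9, 0.1, 0.0, 0.4],
    "generator_b": [0.1, 0.8, 0.5, 0.0],
}

name, similarity = attribute_generator([0.85, 0.15, 0.05, 0.35], KNOWN)
```

In this toy run the residual points almost the same way as the `generator_a` fingerprint, so that name is returned. A residual that resembles nothing in the library comes back as `None`, which is exactly the situation a brand-new generator creates.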

Proving Liveness with Interactive Challenges

Instead of just analyzing a video after it’s been created, some of the most effective methods verify a person’s identity in real time. A challenge-response system does exactly that by asking a user to perform a simple, live action. For example, a platform might ask you to turn your head to the left, smile, or repeat a random phrase. Most deepfakes are pre-rendered videos and can’t react to spontaneous commands. This simple test, often called a liveness check, is an incredibly powerful way to confirm that you’re interacting with a living, breathing person and not a digital puppet.
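
A challenge-response flow like the one described above might be sketched as follows. This is a minimal illustration under stated assumptions: the action names are hypothetical, the 10-second expiry is arbitrary, and the step that recognizes which action the user actually performed (a vision model) is not shown and is represented by the `observed_action` argument.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical prompts a platform might show to the user.
ACTIONS = ["turn_head_left", "turn_head_right", "smile", "blink_twice"]


@dataclass(frozen=True)
class Challenge:
    nonce: str          # unpredictable ID tying the response to this challenge
    action: str         # the action the user is asked to perform
    issued_at: float    # seconds since the epoch
    ttl_seconds: float  # how long the challenge stays valid


def issue_challenge(now=None, ttl_seconds=10.0):
    """Create a fresh challenge with a random action and a random nonce."""
    now = time.time() if now is None else now
    return Challenge(
        nonce=secrets.token_hex(16),
        action=secrets.choice(ACTIONS),
        issued_at=now,
        ttl_seconds=ttl_seconds,
    )


def verify_response(challenge, nonce, observed_action, now=None):
    """Accept only a timely response to this exact challenge."""
    now = time.time() if now is None else now
    # Constant-time comparison so the check does not leak nonce contents.
    if not secrets.compare_digest(nonce.encode(), challenge.nonce.encode()):
        return False
    if now - challenge.issued_at > challenge.ttl_seconds:
        return False  # too slow: could be an offline-rendered reply
    return observed_action == challenge.action
```

Because the action is chosen at random and expires quickly, a recording made in advance has no way of knowing what to show. A production system would also mark each nonce as used so the same response cannot be replayed.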

The Role of Biometrics in Identity Verification

Taking live verification a step further, behavioral biometrics analyze the unique ways a person moves, speaks, and expresses themselves. This method isn’t just checking if you can follow a command; it’s confirming that your behavior is authentically human. The system might analyze your subtle facial movements, the cadence of your voice, or even the way you blink to create a unique profile. Because these biological and behavioral traits are incredibly difficult to replicate with AI, they provide a strong layer of defense. This approach focuses on verifying the human signal itself, ensuring the person on the other side of the screen is exactly who they claim to be.
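
One simple way to picture a behavioral profile is as a set of per-feature averages, with new sessions scored by how far they stray from those averages. The sketch below uses that idea; the feature names, the sample numbers, and the choice of a mean absolute z-score are assumptions for illustration, and real systems use far richer models.

```python
from statistics import mean, stdev


def build_profile(sessions):
    """Summarize enrollment sessions as feature -> (mean, standard deviation).

    `sessions` is a list of dicts with the same keys; at least two
    sessions are needed so a standard deviation can be computed.
    """
    profile = {}
    for feature in sessions[0]:
        values = [session[feature] for session in sessions]
        profile[feature] = (mean(values), stdev(values))
    return profile


def deviation_score(profile, observation):
    """Mean absolute z-score of a new observation against the profile.

    0.0 means "exactly average for this user"; larger values mean the
    behavior looks less like the enrolled person.
    """
    z_scores = []
    for feature, (mu, sigma) in profile.items():
        if sigma == 0:
            continue  # feature never varied during enrollment; skip it
        z_scores.append(abs(observation[feature] - mu) / sigma)
    return sum(z_scores) / len(z_scores) if z_scores else 0.0


# Hypothetical enrollment data for one user.
enrolled = [
    {"blinks_per_minute": 15, "words_per_minute": 140},
    {"blinks_per_minute": 17, "words_per_minute": 150},
    {"blinks_per_minute": 16, "words_per_minute": 145},
]
profile = build_profile(enrolled)
typical = deviation_score(profile, {"blinks_per_minute": 16, "words_per_minute": 145})
unusual = deviation_score(profile, {"blinks_per_minute": 19, "words_per_minute": 160})
```

Here the typical session scores 0 and the unusual one scores about 3, meaning each feature sits roughly three standard deviations from this user's norm. Where to draw the line between "normal variation" and "suspicious" is a policy decision the sketch does not make.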

What Makes Deepfake Detection So Difficult?

Spotting deepfakes isn’t as simple as looking for a glitchy video or a robotic voice. The technology behind these fabrications is evolving at a breakneck pace, creating a significant challenge for businesses that rely on digital trust. As generative AI becomes more sophisticated, the tells we once relied on are disappearing, making automated, intelligent detection more critical than ever. Several key factors make this a particularly tough problem to solve, turning the effort into a constant technological race where the stakes are incredibly high. Understanding these hurdles is the first step toward building a more resilient defense.

The AI Cat-and-Mouse Game

The fundamental challenge in deepfake detection is that both sides are using the same technology. As soon as a new detection method is developed, deepfake creators find ways to get around it, which creates a constant cycle of innovation on both sides. Older advice, like looking for unnatural blinking or strange artifacts, is no longer reliable on its own because the AI used to create deepfakes has improved so much. Even experts now struggle to spot the best fakes without advanced tools. This escalating arms race means that an effective detection strategy can’t be static; it must learn and adapt continuously to keep up with emerging threats.

Major Research Efforts like the DFDC

The scale of this problem has sparked major collaborative research initiatives, as tech leaders recognize that no single company can solve this alone. A great example is the Deepfake Detection Challenge (DFDC), which put up a $1 million prize pool to encourage researchers to develop new ways to spot synthetic media. This wasn’t just an academic exercise; it was a call to arms for the brightest minds in AI to help build better defenses against a growing threat. These efforts have pushed the technology forward, with top models reaching over 82% accuracy on the challenge’s public test data, but they also highlight the complexity of the problem. On a held-out set of previously unseen videos, the best result reportedly dropped to about 65%. The results show that even with massive investment, detection is not a solved issue, reinforcing the need for continuous innovation and adaptive security measures.

Why a Lack of Data Hinders Detection

Effective AI detection models learn by analyzing massive datasets containing both real and fake content, so the quality and diversity of that training data are paramount. As new deepfake generation techniques appear, existing datasets can quickly become outdated. A model trained to spot fakes from last year might be completely blind to the methods being used today. Building and maintaining a comprehensive, up-to-date training dataset is a resource-intensive process. Without a steady stream of relevant examples, detection models can’t learn to identify the subtle fingerprints of the latest generative AI, leaving them a step behind the fraudsters.

Detecting Fakes Across Video, Audio, and Images

A deepfake isn’t just one thing; it can be a video, a still image, a voice recording, or a combination of all three. A detection model that excels at analyzing video footage might be useless for identifying a synthetic voice in an audio clip. Each media type has its own unique characteristics and potential artifacts. This means a robust deepfake detection solution can’t be a one-size-fits-all tool. Instead, it requires a multi-layered approach, using different models and techniques tailored to the specific type of content being analyzed. This complexity makes creating a single, comprehensive detection platform a significant technical challenge for any organization.
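
In practice, "different models for different media" often starts with a small routing layer that sends each file to the right detector. The sketch below shows only that routing step. The three `analyze_*` functions are hypothetical placeholders that return a description instead of running a model, and guessing the type from the file extension is a simplifying assumption.

```python
import mimetypes


def analyze_image(path):
    return f"image detector would analyze {path}"  # placeholder, no real model


def analyze_video(path):
    return f"video detector would analyze {path}"  # placeholder, no real model


def analyze_audio(path):
    return f"audio detector would analyze {path}"  # placeholder, no real model


# One specialized detector per media family.
DETECTORS = {
    "image": analyze_image,
    "video": analyze_video,
    "audio": analyze_audio,
}


def route(path):
    """Pick a detector based on the file's guessed MIME type."""
    mime, _ = mimetypes.guess_type(path)
    family = mime.split("/")[0] if mime else None
    detector = DETECTORS.get(family)
    if detector is None:
        raise ValueError(f"no detector registered for {path!r} (type: {mime})")
    return detector(path)
```

Calling `route("clip.mp4")` reaches the video placeholder and `route("voice.wav")` reaches the audio one, while an unsupported type raises an error instead of being silently passed through. A stricter version would inspect the file contents, since an extension is easy to fake.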

When Fakes Are Mixed with Real Content

Attackers are becoming more strategic, often blending real and synthetic media to create hybrid fakes that are much harder to flag. For instance, a fraudster might insert a deepfaked face into an otherwise authentic video or subtly manipulate a few words in a genuine audio recording. These partial fakes often bypass detectors trained to look for fully fabricated content. Because parts of the media are real, the usual digital fingerprints of AI generation are less obvious. These hybrid attacks exploit the gray areas in detection, making it difficult for automated systems to confidently distinguish between what’s authentic and what’s been manipulated.
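
One reason partial fakes slip through is averaging: if only a few seconds are manipulated, a whole-clip score can stay low. A common countermeasure is to score short windows and look at the worst one. The sketch below assumes a per-frame "probability of fake" score already exists from some detector (not shown); the window size and the 0.7 threshold are illustrative.

```python
def windowed_scores(frame_scores, window=5):
    """Average the per-frame scores over each sliding window."""
    if not frame_scores:
        return []
    if len(frame_scores) < window:
        return [sum(frame_scores) / len(frame_scores)]
    return [
        sum(frame_scores[i:i + window]) / window
        for i in range(len(frame_scores) - window + 1)
    ]


def flag_partial_fake(frame_scores, window=5, threshold=0.7):
    """Flag the clip if ANY window looks fake, and report the peak score."""
    peak = max(windowed_scores(frame_scores, window), default=0.0)
    return peak >= threshold, peak


# Toy clip: mostly clean frames with a short manipulated stretch in the middle.
clip = [0.1] * 20 + [0.9] * 5 + [0.1] * 20
whole_clip_mean = sum(clip) / len(clip)
flagged, peak = flag_partial_fake(clip)
```

For this toy clip the whole-clip average stays well under the threshold, but the peak window lands on the manipulated stretch and the clip is flagged. The trade-off is more false alarms, because a single noisy window is now enough to trigger a flag.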

Why One Detector Can’t Catch Every Fake

Perhaps the biggest hurdle in machine learning is generalization: the ability of a model to perform accurately on new, unseen data. A detection model might be perfectly tuned to identify all the deepfakes in its training set, but how will it handle a fake created with a brand-new algorithm it has never encountered before? This is a critical issue, as attackers are constantly developing novel techniques. For a detection system to be truly effective in the real world, it must be able to generalize from what it has learned and identify the fundamental patterns of manipulation, not just the specific flaws of past deepfake methods.
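
A practical way to see the generalization problem is to report accuracy separately for each generator instead of as one overall number. The sketch below does that with a deliberately naive toy detector; the generator names, the `blur` feature, and the data are invented for illustration.

```python
from collections import defaultdict


def accuracy_by_generator(samples, predict):
    """Return accuracy per source, where each sample is
    (features, is_fake, source_name) and predict(features) -> bool."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for features, is_fake, source in samples:
        total[source] += 1
        if predict(features) == is_fake:
            correct[source] += 1
    return {source: correct[source] / total[source] for source in total}


def toy_detector(features):
    """Naive rule that only knows one artifact: blurry face edges."""
    return features["blur"] > 0.5


# Toy data: the detector was "tuned" on seen_gen, which leaves blurry edges.
# unseen_gen is a newer method that does not, so the rule misses it.
samples = [
    ({"blur": 0.2}, False, "real"),
    ({"blur": 0.1}, False, "real"),
    ({"blur": 0.8}, True, "seen_gen"),
    ({"blur": 0.9}, True, "seen_gen"),
    ({"blur": 0.3}, True, "unseen_gen"),
    ({"blur": 0.2}, True, "unseen_gen"),
]

report = accuracy_by_generator(samples, toy_detector)
```

In this toy run the detector is perfect on real content and on the generator it was tuned for, and catches nothing from the unseen one. An overall accuracy figure would hide that gap, which is why held-out generators are worth tracking separately.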

Your Toolkit for Deepfake Detection

Knowing how to spot a deepfake is one thing, but having the right tools makes all the difference. The good news is that you don’t have to rely on your eyes and ears alone. A growing number of services are available to help you verify digital content, ranging from free online checkers for quick scans to sophisticated enterprise systems for comprehensive protection. The key is to find the right tool for your specific needs, whether you’re a journalist verifying a source or a business protecting your customer onboarding process.

Top Platforms for Professional-Grade Detection

For organizations where trust is non-negotiable, professional detection platforms offer a powerful line of defense. These services are designed for entities like newsrooms, financial institutions, and government agencies that require a high degree of accuracy. They use advanced AI models to analyze video, audio, and images for subtle signs of manipulation that are nearly impossible for humans to catch. For example, platforms like Facia provide AI-powered tools that help businesses and public sector organizations protect themselves from scams and misinformation campaigns fueled by synthetic media. These platforms are an essential investment for anyone whose reputation or security depends on authentic content.

Free Tools for a Quick Online Check

If you just need to run a quick check on a suspicious image or video you’ve come across, a free online tool can be a great starting point. Several websites allow you to upload a file and get an instant analysis. These tools use AI to scan for common deepfake artifacts and can give you a quick read on whether a piece of content might be manipulated. A service like DeepfakeDetection.io offers a simple interface for checking images, videos, and even voice recordings. While these free options are incredibly accessible, it’s wise to remember they may not offer the same level of accuracy or security as a professional service, making them best for personal or low-stakes use.

What to Expect from a Free Tool

When you use a free online deepfake detector, you can expect a pretty straightforward experience. These tools are built for accessibility, typically allowing you to upload an image, video, or audio file directly from your computer without needing to create an account or provide any personal information. The analysis is usually very fast, often delivering a result in just a few seconds. This speed and lack of friction make them a great option for anyone who wants to quickly verify a piece of content they’ve encountered online. It’s a simple, no-commitment way to get a second opinion on a suspicious file without any technical know-how.

Understanding the Results and Reports

Once the tool has analyzed your file, it will typically present the findings in a clear, easy-to-understand format. You’ll likely see a score or percentage that indicates the probability of the content being a deepfake. Beyond that simple score, many services also provide a summary or a more detailed report that you can download or share. This report might highlight specific areas of the image or video that flagged the system’s suspicion. These results are not only useful for judging the authenticity of a single file but can also be a great learning tool, helping you get better at recognizing the subtle signs of manipulation in the future.
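
It helps to remember that the score is a probability, not a verdict. A tool might translate it into plain-language bands along these lines; the band names and the 0.4 and 0.8 cutoffs are assumptions for illustration, and each real service chooses its own.

```python
def interpret_score(probability_fake, review_at=0.4, flag_at=0.8):
    """Map a 0-to-1 'probability of deepfake' score onto a plain-language band."""
    if not 0.0 <= probability_fake <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if probability_fake >= flag_at:
        return "likely manipulated"
    if probability_fake >= review_at:
        return "inconclusive, review manually"
    return "no manipulation detected"
```

The middle band matters most: a score of 0.5 does not mean "half fake", it means the tool cannot tell, and the file deserves a closer look before anyone acts on it.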

Enterprise Solutions for Company-Wide Protection

For businesses, protecting systems and customers from AI-driven fraud requires a more robust and integrated approach. Enterprise-level solutions go beyond one-off checks by providing continuous, scalable protection that adapts to new threats. These systems are built to handle high volumes of data and integrate directly into your existing security infrastructure. Companies like Daon offer AI-driven tools that use biometrics and machine learning to detect deepfakes and other forms of identity fraud in real time. These solutions are designed to evolve, constantly learning from new data to stay ahead of the increasingly sophisticated methods used by bad actors.

Confirming Human Presence at Scale

For large platforms, the challenge isn’t just detecting a single deepfake; it’s about verifying human presence for millions of interactions every day. This is where automated, real-time verification becomes essential. Instead of analyzing a file after the fact, the most effective systems confirm a person’s identity live. This is often done through a simple challenge-response test, sometimes called a liveness check. A platform might ask a user to perform a straightforward, spontaneous action like turning their head or smiling. Because most deepfakes are pre-rendered videos, they can’t react to these commands. This is the core idea behind technologies designed to confirm human presence at scale, using a brief, frictionless interaction to ensure there’s a living, breathing person behind the screen and not a digital puppet.

Taking this a step further, advanced systems also use behavioral biometrics to analyze the unique ways a person moves and expresses themselves. It’s not just about *if* you can follow a command, but *how* you do it. The technology analyzes subtle facial movements, the cadence of a voice, or even blinking patterns to confirm that the behavior is authentically human. These biological and behavioral traits are exceptionally difficult to replicate with AI, so combining liveness checks with biometric analysis gives platforms a far more robust defense than either technique alone. This multi-faceted approach focuses on verifying the human signal itself, providing strong, continuous assurance that the person on the other side is exactly who they claim to be.

How to Integrate Detection into Your Workflow

The most effective defense against deepfakes isn’t just about having a tool; it’s about making detection a seamless part of your daily operations. Think about where your organization is most vulnerable. You can embed AI-based detection directly into your call center software to flag synthetic voices or add it to your video conferencing platforms to prevent impersonation. By integrating detection into the systems you already use, you create an active shield rather than a passive checkpoint. For even stronger security, you can layer deepfake detection with other verification methods, like voice or face recognition, to build a multi-faceted defense that is much harder to penetrate.

How to Build an Effective Defense Against Deepfakes

Building a solid defense against deepfakes isn’t about finding a single piece of software that solves the problem forever. Instead, it’s about creating a resilient and adaptive security posture. Because the technology used to create fakes is constantly improving, our methods for spotting them must evolve right alongside it. A truly effective strategy is a comprehensive one, blending advanced technology with smart processes and human oversight.

Think of it less like building a wall and more like creating an immune system for your platform. It needs multiple lines of defense that work together, the ability to learn from new threats, a clear set of rules to operate by, and the wisdom of human judgment to guide it. This approach prepares you not just for the deepfakes we see today, but for the more sophisticated versions that are sure to come. By focusing on these four key areas, you can build a framework that protects your systems, your decisions, and the communities you serve.

Why a Layered Defense Is Your Best Bet

Relying on a single method to spot deepfakes is risky. As one source notes, even advanced detection methods “often struggle with real-world, high-quality fakes,” which makes a layered approach necessary. This defense-in-depth strategy combines different technologies so that each can catch what the others miss. For example, you can pair AI content analysis, which scans for digital artifacts, with biometric verification that confirms a user’s liveness. You might also add behavioral analysis to the mix, which looks at how a user interacts with your platform. Each layer acts as a checkpoint, creating a much stronger and more reliable verification process than any single tool could provide on its own.
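One way to picture how the layers work together is as a small decision function that takes the result of each check and returns a single outcome. The sketch below is only an illustration: the layer names, the `LayerResult` type, and the `block_at` and `review_at` thresholds are assumptions chosen for the example, not values from any real system.

```python
from dataclasses import dataclass


@dataclass
class LayerResult:
    name: str    # which check produced this result
    risk: float  # 0.0 (looks genuine) to 1.0 (looks synthetic)


def combine_layers(results, block_at=0.9, review_at=0.5):
    """Return 'block', 'review', or 'allow' from several independent checks.

    Thresholds are illustrative placeholders, not tuned values.
    """
    if not results:
        return "review"  # no evidence at all: do not silently allow
    worst = max(r.risk for r in results)
    average = sum(r.risk for r in results) / len(results)
    # A single highly confident layer can block on its own,
    # so one strong signal is not diluted by the others.
    if worst >= block_at:
        return "block"
    # Moderate suspicion, from one layer or spread across several, goes to review.
    if worst >= review_at or average >= review_at / 2:
        return "review"
    return "allow"


checks = [
    LayerResult("artifact_analysis", 0.20),
    LayerResult("liveness", 0.95),
    LayerResult("behavioral", 0.10),
]
print(combine_layers(checks))  # prints "block"
```

In this example the artifact scan and the behavioral check both look clean, but the liveness layer is confident enough to block on its own. That is the case a single-tool setup would miss.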

The Importance of Continuous Monitoring

Deepfakes are a fast-moving target. As soon as a new detection method is developed, creators of fakes are already working on ways to get around it. This is why your defense can’t be a “set it and forget it” solution. Your detection systems need to be “smarter, faster, and built with AI at their core, constantly updating to keep up with new threats.” When choosing a detection partner, look for one that is committed to continuous research and model updates. An effective defense requires real-time monitoring that can adapt as new manipulation techniques emerge, ensuring your platform is protected against the threats of tomorrow, not just the ones from yesterday.

Creating Ethical Guidelines for AI Use

Technology is only part of the solution. You also need a clear plan for how your organization will handle deepfake incidents. Since this is a relatively new challenge, it’s critical to “set up rules and guidelines now before it becomes too hard to control.” This means creating an internal playbook that outlines the steps to take when a deepfake is suspected or confirmed. Who is responsible for investigating? How are decisions escalated? What are the protocols for communicating with affected users? Establishing these ethical frameworks not only prepares your team to act decisively but also builds trust with your audience by showing you are handling this threat responsibly and transparently.

The Power of Education and Media Literacy

Automated detection is essential, but it’s only half the battle. The strongest defense combines powerful technology with an educated and aware team. Think of it as a crucial human layer in your security strategy. By investing in media literacy training, you equip your employees to act as a firewall against manipulation. This isn’t just about teaching them to spot a glitchy video; it’s about fostering a culture of healthy skepticism. When your team is trained to question the authenticity of unexpected requests or unusual communications, they are far less likely to fall for a deepfake-powered social engineering attack. As experts from the Poynter Institute emphasize, developing these critical thinking skills is a vital part of staying secure in a challenging digital landscape.

Always Keep a Human in the Loop

While AI is essential for detecting deepfakes at scale, it shouldn’t operate in a vacuum. The most robust security systems combine automated analysis with human expertise. As security experts advise, “combining AI with human checks, biometrics, and device signals…will create the strongest defenses.” A human-in-the-loop model allows AI to do the heavy lifting by flagging suspicious content 24/7, but it reserves the final judgment for a trained human analyst. This approach is crucial for nuanced or high-stakes cases where context is key. It provides a vital safeguard against false positives and ensures that critical decisions are made with both technological precision and human understanding.
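A human-in-the-loop model can be sketched as a simple queue: the detector scores everything, but anything it flags waits for an analyst's decision. The Python below is a minimal illustration under stated assumptions; the `ReviewQueue` class, the `flag_at` threshold, and the item IDs are hypothetical.

```python
from collections import deque


class ReviewQueue:
    """The model flags; a trained analyst makes the final call."""

    def __init__(self, flag_at=0.5):
        self.flag_at = flag_at  # illustrative threshold, not a tuned value
        self.pending = deque()
        self.decisions = {}

    def submit(self, item_id, score, high_stakes=False):
        # High-stakes items always get a human decision, whatever the score.
        if high_stakes or score >= self.flag_at:
            self.pending.append((item_id, score))
            return "queued_for_analyst"
        return "passed"

    def decide(self, analyst, is_fake):
        # Record the analyst's verdict for the oldest pending item.
        item_id, score = self.pending.popleft()
        self.decisions[item_id] = {
            "analyst": analyst,
            "is_fake": is_fake,
            "model_score": score,
        }
        return item_id


queue = ReviewQueue()
print(queue.submit("call-001", 0.12))                    # prints "passed"
print(queue.submit("call-002", 0.81))                    # prints "queued_for_analyst"
print(queue.submit("wire-003", 0.05, high_stakes=True))  # prints "queued_for_analyst"
print(queue.decide("analyst_a", is_fake=True))           # prints "call-002"
```

Storing the model's score next to the analyst's verdict is a deliberate choice in this sketch. Disagreements between the two are a useful record when you want to see where the detector produces false positives.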

Frequently Asked Questions

Can’t I just train my team to spot deepfakes visually?

While it’s always smart to encourage critical thinking, relying solely on human observation is no longer a viable strategy. Early deepfakes had obvious flaws like weird blinking or blurry edges, but the technology has advanced far beyond that. Today’s fakes are incredibly sophisticated, and the AI that creates them is specifically designed to fool the human eye. Automated detection systems are necessary because they can analyze pixels, audio frequencies, and digital artifacts in ways we simply can’t, catching inconsistencies that are invisible to us.

What’s the difference between a deepfake and a regular edited video?

The main difference comes down to artificial intelligence. A traditionally edited video might involve cutting scenes, adding special effects, or changing the background, but the people in it are still the original people. A deepfake uses AI, specifically deep learning models, to completely replace or generate a person’s face or voice. It learns someone’s unique expressions and mannerisms from thousands of images and then maps them onto another person, creating entirely new, synthetic content that never actually happened.

Are deepfakes only a problem for celebrities and politicians?

Not at all. While high-profile cases involving public figures get the most attention, the threat to businesses is very real and growing. Scammers are using deepfake technology to impersonate executives on video calls to authorize fraudulent wire transfers or to fake customer identities during onboarding processes. Deepfakes can also be used to create fake testimonials or spread misinformation about your company. Any organization that relies on digital communication and verification is a potential target.

If the technology is always changing, how can any detection tool stay effective?

You’ve hit on the central challenge. A static detection tool will quickly become obsolete. That’s why the best defense systems are built on AI that is constantly learning and evolving. Effective solutions don’t just look for a fixed set of flaws; they are continuously trained on new data, including the latest deepfake generation methods. This allows them to adapt and identify the fingerprints of new manipulation techniques as they emerge, ensuring the defense keeps pace with the threat.

What is the most important first step my business can take to protect itself?

The most crucial first step is to shift your mindset from passively analyzing content to proactively verifying human presence. Instead of just asking “is this video fake?” after the fact, start asking “is there a real, live person here right now?” during critical interactions. Implementing a real-time liveness check, which asks a user to perform a simple, spontaneous action, is a powerful starting point. This simple test is incredibly difficult for a pre-made deepfake to pass and establishes a strong foundation for a layered security strategy.
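The “simple, spontaneous action” in a liveness check can be thought of as a challenge and response: the system picks an unpredictable action, gives the user a short window, and only accepts a matching response within that window. The Python sketch below illustrates that logic only. The action list, the 10-second window, and the `observed_action` input (which in practice would come from a separate vision model, not shown here) are all assumptions made for the example.

```python
import secrets
import time

# Illustrative actions; a real system would choose its own set.
ACTIONS = [
    "turn your head left",
    "turn your head right",
    "blink twice",
    "smile",
    "raise your eyebrows",
]


def issue_challenge(ttl_seconds=10.0, now=None):
    """Pick an unpredictable action and attach a short expiry."""
    now = time.monotonic() if now is None else now
    return {
        "nonce": secrets.token_hex(8),        # ties the response to this challenge
        "action": secrets.choice(ACTIONS),    # chosen at random, so it cannot be pre-recorded
        "expires_at": now + ttl_seconds,
    }


def verify_response(challenge, nonce, observed_action, now=None):
    """Accept only a matching action for this challenge, inside the time window."""
    now = time.monotonic() if now is None else now
    if now > challenge["expires_at"]:
        return False  # too slow: leaves time to generate a fake on demand
    if not secrets.compare_digest(nonce, challenge["nonce"]):
        return False  # response belongs to a different challenge
    return observed_action == challenge["action"]


challenge = issue_challenge()
print("Please", challenge["action"])
print(verify_response(challenge, challenge["nonce"], challenge["action"]))
```

The random choice and the short expiry carry most of the weight here: a pre-made clip cannot know which action will be requested, and the deadline limits the time available to produce one. A production system would also mark each challenge as used so it cannot be replayed.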